Mechanical Search Algorithm

A General Framework for Mechanical Search and Grasping

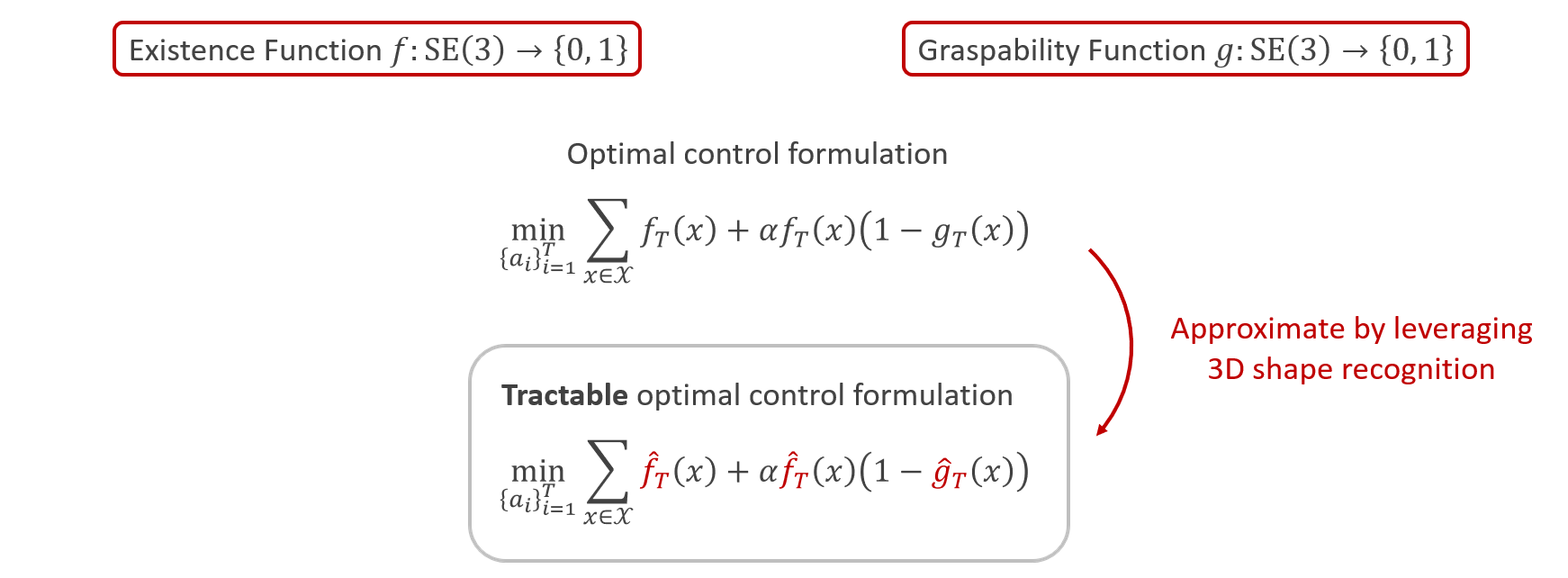

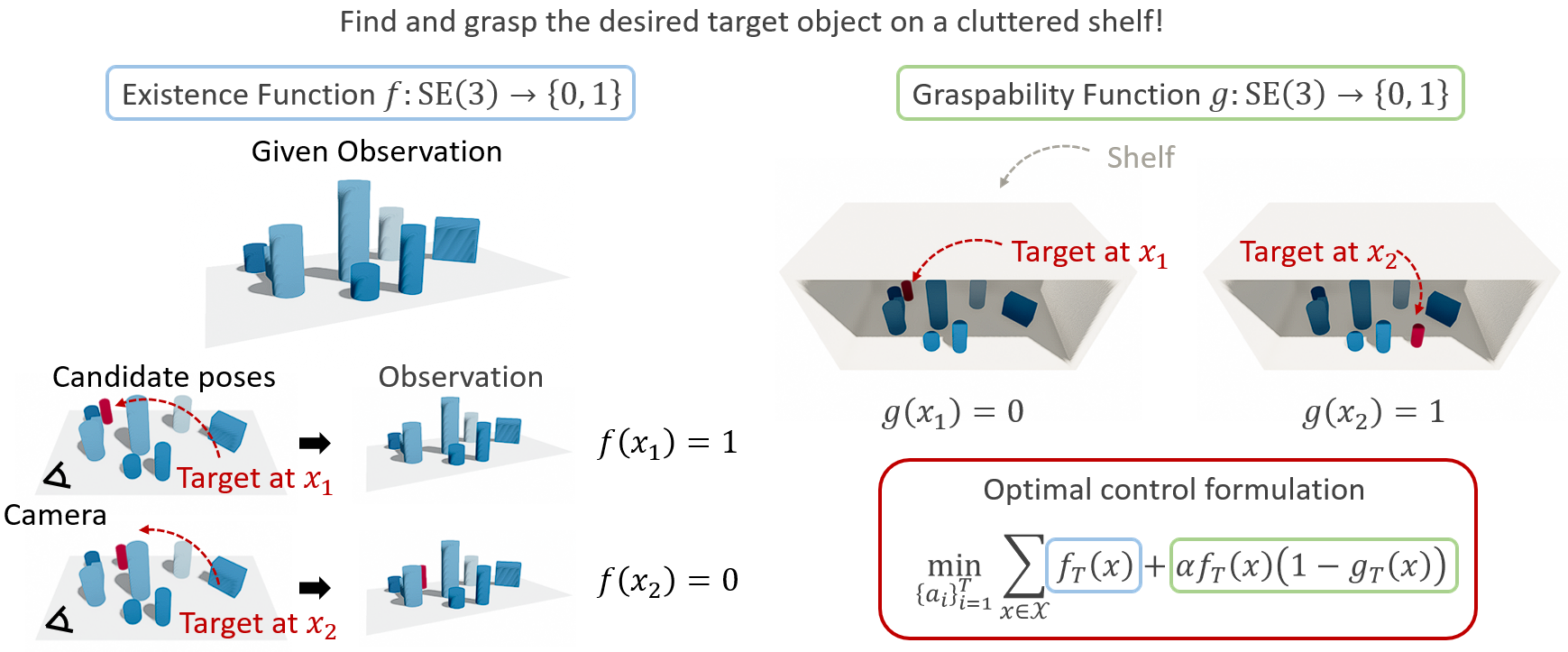

In this paper, we propose a novel optimal control framework for mechanical search, together with practical algorithms that leverage 3D reconstruction models. First, denoting the target's candidate pose by \(x\in \mathrm{SE}(3)\), we introduce two indicator functions: (i) an existence function \(f(x)\) that indicates whether the target can be present at \(x\), and (ii) a graspability function \(g(x)\) that indicates whether the target at \(x\) is graspable. The objective is then to rearrange the surrounding objects until exactly one pose \(x^*\) remains at which the target can both exist and be grasped, i.e., there exists a unique \(x^*\in \mathrm{SE}(3)\) such that \(f(x^*)=1\) and \(g(x^*)=1\). This leads to a straightforward definition of a cost function in terms of \(f\) and \(g\).
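To make the termination condition concrete, the following minimal sketch evaluates it on a finite set of sampled candidate poses. The discretization, the function names `search_status` and `illustrative_cost`, and the particular cost \(|n-1|\) are assumptions made for illustration only; the cost actually used in the paper is not specified in this summary.

```python
import numpy as np

def search_status(f_vals, g_vals):
    """Count sampled poses that are both existable (f=1) and graspable (g=1).

    f_vals, g_vals: 0/1 values of f and g on the same sampled candidate poses
    (hypothetical discretization of SE(3), not taken from the paper).
    Returns (n, done), where done is True when exactly one such pose remains.
    """
    f = np.asarray(f_vals, dtype=bool)
    g = np.asarray(g_vals, dtype=bool)
    n = int(np.sum(f & g))
    return n, n == 1

def illustrative_cost(f_vals, g_vals):
    """Illustrative cost (an assumption): distance of the count from one."""
    n, _ = search_status(f_vals, g_vals)
    return abs(n - 1)

# Two poses satisfy f=1 and g=1, so the search is not finished yet.
print(search_status([1, 1, 0, 1], [0, 1, 1, 1]))
print(illustrative_cost([1, 1, 0, 1], [0, 1, 1, 1]))
```

In this toy version, the cost reaches zero exactly when the uniqueness condition on \(x^*\) holds over the sampled poses.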

3D Object Recognition-based Mechanical Search

We leverage a 3D object recognition model to estimate the functions \(f\) and \(g\) and their corresponding dynamics models, which lets us formulate a tractable model-based optimal control problem. Specifically, we employ a recent 3D recognition model based on superquadric primitives. The superquadric representation allows for rapid collision checks, depth image rendering, and the use of pushing dynamics models, which in turn lead to effective estimates of \(f\) and \(g\) and their dynamics. To mitigate accumulated estimation errors during optimal control, we adopt model predictive control with a short time horizon.
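One reason superquadrics permit rapid collision checks is that they have a closed-form inside-outside function. The sketch below uses the standard superquadric implicit function, with points expressed in the primitive's local frame; the function names, the point-sampling collision test, and the omission of the pose transform are simplifying assumptions, not the paper's implementation.

```python
import numpy as np

def superquadric_implicit(points, scales, e1, e2):
    """Standard superquadric inside-outside function F.

    F < 1: inside, F == 1: on the surface, F > 1: outside.
    points: (N, 3) array in the superquadric's local frame.
    scales: (a1, a2, a3) semi-axis lengths; e1, e2: shape exponents.
    """
    p = np.abs(np.asarray(points, dtype=float)) / np.asarray(scales, dtype=float)
    xy = p[..., 0] ** (2.0 / e2) + p[..., 1] ** (2.0 / e2)
    return xy ** (e2 / e1) + p[..., 2] ** (2.0 / e1)

def points_collide(points, scales, e1, e2):
    """Point-sampling check (an assumption): any sampled point strictly inside."""
    return bool(np.any(superquadric_implicit(points, scales, e1, e2) < 1.0))

# With e1 = e2 = 1 and unit scales the primitive is a unit sphere.
samples = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
print(superquadric_implicit(samples, (1.0, 1.0, 1.0), 1.0, 1.0))

# The same corner point is outside the sphere but inside a box-like shape.
corner = np.array([[0.9, 0.9, 0.9]])
print(points_collide(corner, (1.0, 1.0, 1.0), 1.0, 1.0))
print(points_collide(corner, (1.0, 1.0, 1.0), 0.1, 0.1))
```

Because each query is a handful of elementwise operations, many candidate poses can be tested in a single vectorized call, which is the kind of cost profile that makes evaluating \(f\) and \(g\) inside a short-horizon control loop practical.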